How AI Can Help Companies Avoid Bias in Hiring


AI has become indispensable to the hiring process. According to a Harvard Business School study, 99% of all Fortune 500 companies and 75% of all companies in the US are using AI in hiring; around 45% of companies globally are using AI to improve recruitment and human resources.

“These tools let you process a lot more résumés,” Dan Costa, Chief Content and Product Officer at Clarim Media, and former Editor-in-Chief of PCMag.com, tells Staffing.com. “As we are now moving into a decentralized workforce, you could be getting résumés for a job in New York from all over the planet. But you can't read all those résumés. AI in the hiring process can help solve the volume problem.”

Beyond its ability to manage the sheer number of résumés that recruiters are fielding, “AI is a powerful tool to actually take the hiring bias out of both résumés and job descriptions,” Rajamma Krishnamurthy, Senior Director of HR Technology at Microsoft, tells Staffing.com. AI hiring tools can remove or replace names, phrases, and other details on a résumé that signal a person’s race or gender and could trigger bias. “We can easily train a bot with AI to sweep it and say, ‘bring a clean job résumé to the table,’” says Krishnamurthy.
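To make the idea concrete, here is a minimal Python sketch of the kind of redaction Krishnamurthy describes. It does not reflect how any particular vendor works: the `redact_resume` function, the `GENDER_SIGNALS` word list, and the sample candidate are hypothetical, and a short keyword list like this misses many indirect signals that a production tool would also have to handle.

```python
import re

# Hypothetical, deliberately short list of words that can reveal gender.
# A real tool would need a much broader, carefully reviewed vocabulary.
GENDER_SIGNALS = ["he", "she", "him", "his", "her", "hers",
                  "women's", "men's", "fraternity", "sorority"]

def redact_resume(text: str, candidate_name: str) -> str:
    """Return a copy of `text` with name parts and gender signals masked."""
    terms = candidate_name.split() + GENDER_SIGNALS
    # Try longer terms first; \b restricts matches to whole words.
    alternatives = "|".join(re.escape(t) for t in sorted(terms, key=len, reverse=True))
    pattern = rf"\b(?:{alternatives})\b"
    return re.sub(pattern, "[REDACTED]", text, flags=re.IGNORECASE)

sample = "Jane Doe captained the women's chess club. She led her team to a title."
print(redact_resume(sample, "Jane Doe"))
```

Matching whole words keeps “he” from being masked inside words such as “the” or “chess.” The sketch recognizes only straight apostrophes, so “women’s” typed with a curly apostrophe would slip through.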

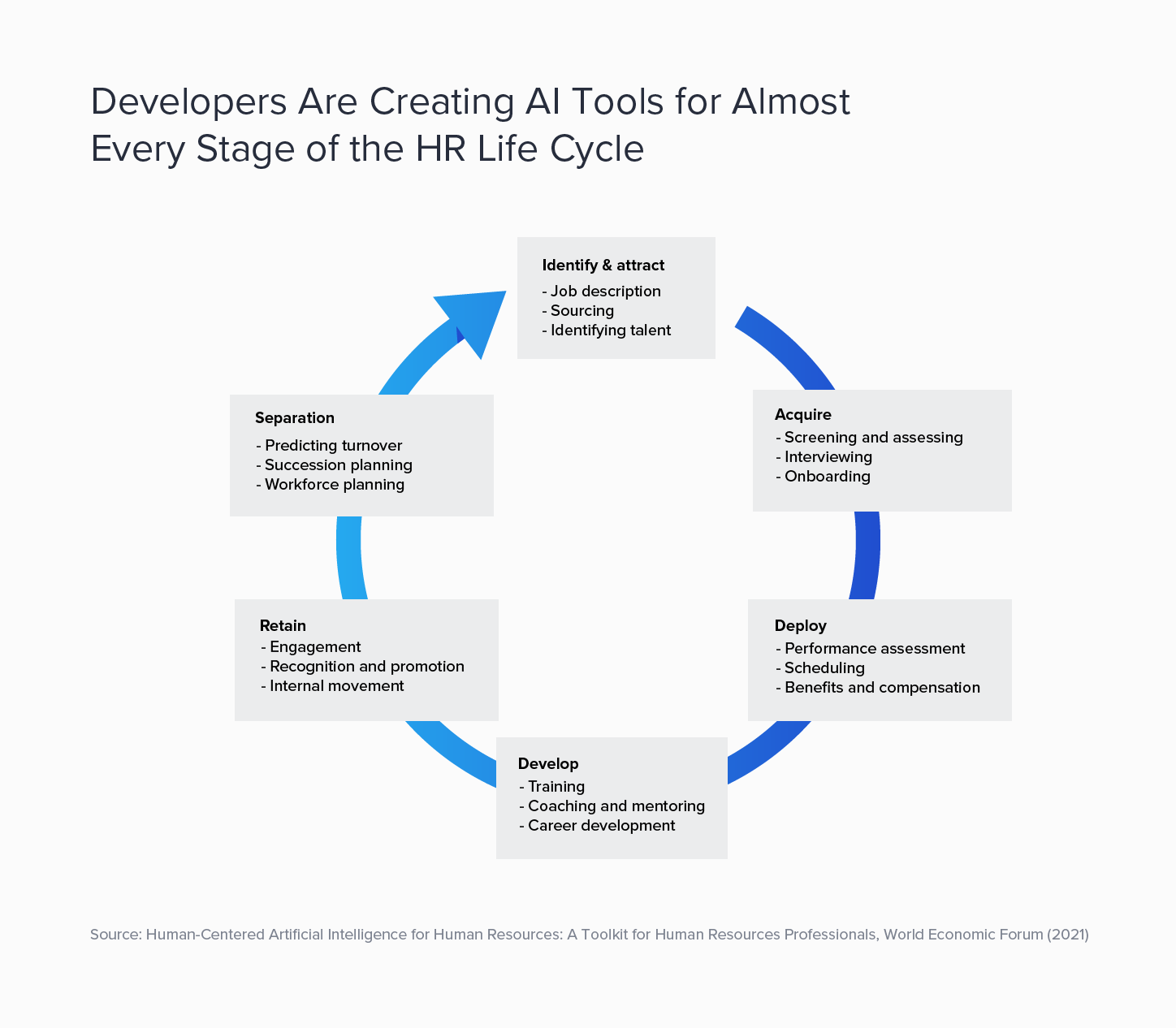

There are now more than 250 commercial AI-based HR tools that help streamline processes, according to a World Economic Forum report. Today’s AI hiring software can analyze many aspects of a potential hire, including word choice, speech patterns, facial expressions, social media presence, and personality traits, and use them to make predictions about the candidate.

The challenge for companies buying or developing such software is that AI is still an evolving technology that can introduce the very biases it was meant to eliminate. The same AI-enabled software that companies use to find diverse candidates can also discriminate, and replacing human bias with algorithmic bias is no solution.

How to Avoid Bias in Hiring With AI

According to the HBS study, 88% of employers say that qualified candidates are rejected because they don’t match the exact criteria established by the job description.

When Amazon built an AI-enhanced tool to speed up résumé screening for technical positions, the company discovered that the tool favored men over women. The model had learned from a decade of résumés submitted to the company, most of them by men, and Amazon ultimately scrapped it.

To avoid AI bias in hiring, companies must create or implement the least biased algorithm possible, says Frida Polli, co-founder and CEO of Pymetrics, which offers AI-enhanced digital recruiting tools and counts Boston Consulting Group, Swarovski, and Colgate-Palmolive among the companies using its AI in HR. “When you're building a hiring algorithm, it's actually in federal employment law that you should be essentially looking for the least biased alternative,” Polli says. In practice, that means an algorithm should produce equal outcomes for men and women, and for people of different ethnic backgrounds.

When the law was created in 1968, it didn’t factor in machine learning. Today, however, a company or organization that uses AI to search for the least biased alternative can build a less biased algorithm and document that search at the same time.

Companies and HR leaders should also have a plan for monitoring and responding to bias when using AI in hiring. In 2020, the Federal Trade Commission offered guidance on how companies can reduce bias, including recommendations for transparency, accuracy, fairness, disclosure, and accountability. In practice, this means examining a tool’s inputs and testing its outcomes so that any disparate impact on protected classes can be identified and corrected.
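One common way to test outcomes is to compare selection rates across groups. The sketch below assumes the “four-fifths” rule of thumb from the EEOC’s Uniform Guidelines on Employee Selection Procedures, under which a group selected at less than 80% of the rate of the most-selected group is generally regarded as evidence of adverse impact. The article does not name a specific test, so treat this as one illustrative option; the function names, group labels, and counts are made up.

```python
from collections import Counter

def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs. Returns {group: rate}."""
    applied, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        applied[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / applied[g] for g in applied}

def four_fifths_check(outcomes, threshold=0.8):
    """Return {group: (impact_ratio, flagged)} relative to the highest selection rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no candidates were selected; ratios are undefined")
    return {g: (r / highest, r / highest < threshold) for g, r in rates.items()}

# Made-up screening results: 50 applicants per group.
outcomes = ([("men", True)] * 20 + [("men", False)] * 30
            + [("women", True)] * 12 + [("women", False)] * 38)

for group, (ratio, flagged) in four_fifths_check(outcomes).items():
    print(f"{group}: impact ratio {ratio:.2f}, flagged={flagged}")
```

A flag is a prompt for closer review, not a legal conclusion; with small samples, a handful of decisions can swing the ratio considerably.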

AI Audits: Setting Standards As New Technologies Emerge

In response to criticism that AI discriminates against protected groups, the US Justice Department and the Equal Employment Opportunity Commission (EEOC) warned employers that using AI tools that discriminate against people with disabilities can violate the Americans with Disabilities Act (ADA). In May 2022, the EEOC filed an algorithmic discrimination case against an English-language tutoring service, alleging that it had programmed its online recruitment software to automatically reject older applicants.

State and local governments are reacting too: In 2019, the Illinois General Assembly passed the Artificial Intelligence Video Interview Act, which applies to employers using AI hiring tools to analyze video interviews of applicants for positions based in the state. This includes the following requirement: “An employer that relies solely upon an artificial intelligence analysis of a video interview to determine whether an applicant will be selected for an in-person interview must collect and report the following demographic data: (1) the race and ethnicity of applicants who are and are not afforded the opportunity for an in-person interview after the use of artificial intelligence analysis; and (2) the race and ethnicity of applicants who are hired.”
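The reporting requirement quoted above amounts to a tally by race and ethnicity for three groups of applicants. Here is a minimal sketch, assuming each applicant record already carries a self-reported race/ethnicity category; the `Applicant` fields, the category labels, and the data are hypothetical, and the statute does not prescribe any data format.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class Applicant:
    race_ethnicity: str        # self-reported category (hypothetical field)
    in_person_interview: bool  # advanced to an in-person interview after the AI analysis
    hired: bool

def demographic_report(applicants):
    """Tally race/ethnicity for the three groups the Illinois provision names."""
    report = {"interviewed": Counter(), "not_interviewed": Counter(), "hired": Counter()}
    for a in applicants:
        group = "interviewed" if a.in_person_interview else "not_interviewed"
        report[group][a.race_ethnicity] += 1
        if a.hired:
            report["hired"][a.race_ethnicity] += 1
    return report

# Made-up example data.
pool = [
    Applicant("Group A", True, True),
    Applicant("Group A", False, False),
    Applicant("Group B", True, False),
    Applicant("Group B", False, False),
]
for group, counts in demographic_report(pool).items():
    print(group, dict(counts))
```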

A New York City law that goes into effect in 2023 “prohibits an employer or employment agency from using an automated employment decision tool in making an employment decision unless, prior to using the tool, the following requirements are met: (1) the tool has been subject to a bias audit within the last year; and (2) a summary of the results of the most recent bias audit and distribution data for the tool have been made publicly available on the employer or employment agency’s website.”
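The law’s text does not spell out how a bias audit is calculated. As an assumption based on the rules New York City’s Department of Consumer and Worker Protection adopted for it, an audit reports, for each category, a selection rate (candidates selected divided by candidates in the category) and an impact ratio (that rate divided by the rate of the most-selected category), including intersectional categories such as sex combined with race/ethnicity. The sketch below computes those two numbers; the `impact_ratios` function, field names, and data are hypothetical, and the official rules should be checked before relying on it.

```python
from collections import defaultdict

def impact_ratios(records, keys):
    """Return {category: (selection_rate, impact_ratio)} for the given fields.

    records: list of dicts with a boolean "selected" field (hypothetical schema).
    keys: the fields that define a category, e.g. ("sex",) or ("sex", "race").
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for record in records:
        category = tuple(record[k] for k in keys)
        totals[category] += 1
        selected[category] += int(record["selected"])
    rates = {c: selected[c] / totals[c] for c in totals}
    top = max(rates.values())
    if top == 0:
        raise ValueError("no candidates were selected; impact ratios are undefined")
    # Each category's rate is compared with the most-selected category's rate.
    return {c: (rate, rate / top) for c, rate in rates.items()}

# Made-up example data.
applicants = [
    {"sex": "F", "race": "Group A", "selected": True},
    {"sex": "F", "race": "Group A", "selected": False},
    {"sex": "M", "race": "Group A", "selected": True},
    {"sex": "M", "race": "Group B", "selected": True},
    {"sex": "M", "race": "Group B", "selected": False},
    {"sex": "F", "race": "Group B", "selected": False},
]

for category, (rate, ratio) in impact_ratios(applicants, ("sex", "race")).items():
    print(category, f"selection rate {rate:.2f}, impact ratio {ratio:.2f}")
```

Passing `("sex",)` or `("race",)` as `keys` produces the single-dimension breakdowns from the same records.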

These regulations are a step in the right direction, says Costa. “A lot of times there's nothing to look back on and say ‘this is why these candidates are moving through and these are not.’ It's based on the internal dynamics and the pattern matching, which is why these biases can be hard to detect.” But when companies are required to audit hiring outcomes, they can identify disparities in who advances. Those who create AI algorithms may be able to program around those issues, Costa says, but companies must be aware of a problem before they can solve it.

Understanding the Options With AI Hiring Bias

Although overcoming AI bias isn’t simple, it is easier to correct than human bias. “Digital tools leave a trace and therefore they're easier to audit and fix,” Polli says. “It's not that these tools are inherently worse or better. But they do make it easier to understand what's happening.” Once the AI industry and regulators understand these tools’ shortcomings, they can develop auditing best practices.

Until then, Polli says it’s important to focus on the possibilities. “Sadly, the hype around the dangers really undermines the fact that some of these new technologies can actually improve compliance.” AI has already improved compliance in other sectors. Banks whose compliance systems produce high false-positive rates, for example, can now use AI to categorize compliance-related activity automatically and flag the items that need attention.

Krishnamurthy notes that AI isn’t a blanket solution for everyone. “Companies need to figure out if they even need to use AI in hiring. If the answer is yes, then they should ask where and how they want to use it,” she says.

Krishnamurthy recommends that HR executives consult the following websites, which provide suggested questions for AI vendors, interactive tools to help users evaluate the technology, and information for staying current on changing technology standards. “They can give you a better understanding of how other companies are using AI platforms,” says Krishnamurthy.

- Data & Trust Alliance brings together leading businesses and institutions from multiple industries to develop responsible data and AI practices. It published an Algorithmic Bias Safeguards for Workforce report, which includes a downloadable PDF that provides questions for vetting AI vendors.

- Partnership on AI is a non-profit group comprising academic, industry, and media organizations that are committed to ensuring that AI hiring is sensitive and responsive to the people who bear the highest risk of bias, error, or exploitation. The group’s goal is to develop new ways “to identify, understand, analyze, and ensure these systems—and those developing and deploying them—are accountable.”

- AI Now Institute, which was founded in 2017 and is part of New York University, produces interdisciplinary research to “measure and understand the effects of AI in society; work with those directly impacted by the use of AI to shape standards and practices that mitigate harm and inform just AI deployment; and help shape a rigorous and inclusive field focused on these issues.”

- Data & Society, a non-profit research organization, is committed to the notion that “empirical evidence should directly inform the development and governance of new technology.” The group studies “the social implications of data and automation, producing original research to ground informed, evidence-based public debate about emerging technology.”

Staying current on the work of these organizations puts HR executives in the driver’s seat when it comes to ensuring an equitable hiring process without AI bias, says Krishnamurthy. “If an AI vendor comes to you and says that your hiring speed will increase by X amount, you’re ready; you can ask them where their data is coming from.” Vendors might not be willing to share specifics about their algorithms, but they should be able to answer questions about their technology and their process. A thoughtful and deliberate approach to implementing AI hiring tools can help companies avoid these pitfalls, says Krishnamurthy. “These decisions should not be made based solely on the speed to hire. They have to be based on fairness.”